
Online text extractor regex

3/14/2023

Extracting only the sentences that contain certain keywords is suitable when you are sure which key matters you wish to study. For example, suppose you run an eCommerce store that sells household products and you are curious how people feel about your new product: you can directly extract all the reviews that contain the name or model of that product. Say the store introduces a bar stool with a different height from its previous product and would like to know how customers react.

To do this, tokenize your dataset from documents into paragraphs or sentences, then extract the paragraphs or sentences that contain the keywords. Sentence tokenization can be done easily with `sent_tokenize` from `nltk.tokenize`.

First, read the text files that contain the text data and the keywords:

```python
# read sentences and extract only the lines which contain the keywords
import re

import pandas as pd

# open the keyword file and the sentence-token file (one item per line)
with open('keyword.txt', 'r', encoding='utf-8') as f:
    keyword = f.readlines()
with open('sent_token.txt', 'r', encoding='utf-8') as f:
    texts = f.readlines()

# define a function to read the lines and remove the trailing newline symbol
def read_file(file):
    texts = []
    for word in file:
        text = word.rstrip('\n')
        texts.append(text)
    return texts

# save to variables
key = read_file(keyword)
corpus = read_file(texts)
```

In the script above, the inputs are the sentence tokens and the list of keywords, each stored in a text file. The following highlighted sentence is the output of text extraction with the keywords 'bar stool' and 'height'.

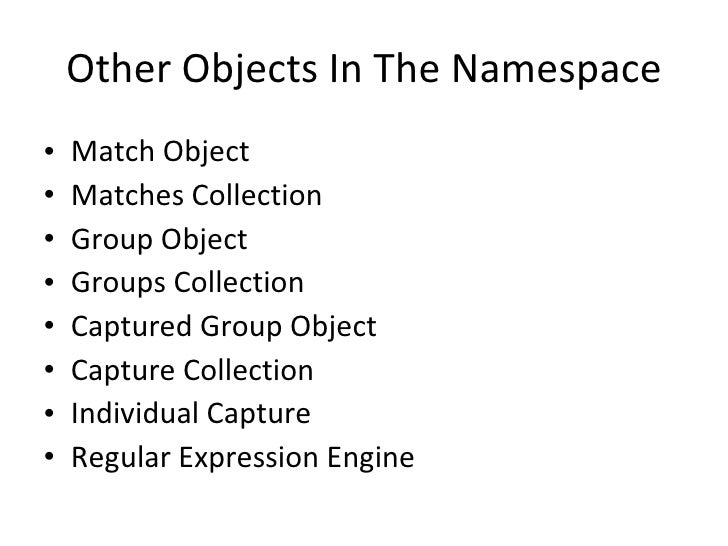

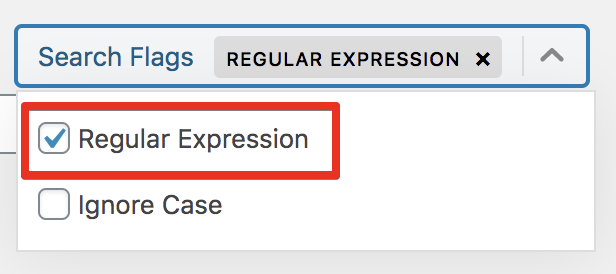
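The script above only loads the keywords and the sentence tokens; the step that actually keeps the matching sentences is not shown in the text. Below is a minimal sketch of that step in plain Python with the standard `re` module, assuming a case-insensitive, whole-word match. The function name `extract_keyword_sentences` and its `require_all` flag are hypothetical, chosen for illustration, and are not the original author's code.

```python
import re


def extract_keyword_sentences(sentences, keywords, require_all=False):
    """Keep the sentences that mention the keywords.

    Hypothetical helper for illustration: matching is case-insensitive and
    whole-word; by default a sentence is kept if it contains ANY keyword,
    or ALL keywords when require_all=True.
    """
    # One compiled pattern per keyword; re.escape treats the keyword literally
    # and \b anchors it to word boundaries ('height' won't match 'heightened').
    patterns = [
        re.compile(r"\b" + re.escape(k.strip()) + r"\b", re.IGNORECASE)
        for k in keywords
        if k.strip()
    ]
    if not patterns:  # no usable keywords, so nothing to extract
        return []
    check = all if require_all else any
    return [s for s in sentences if check(p.search(s) for p in patterns)]


if __name__ == "__main__":
    reviews = [
        "The bar stool is too tall for my counter.",
        "Delivery was fast.",
        "I love the new height of this Bar Stool!",
    ]
    for sentence in extract_keyword_sentences(reviews, ["bar stool", "height"]):
        print(sentence)
```

With the variables from the script above, `extract_keyword_sentences(corpus, key)` keeps every sentence that mentions any keyword; passing `require_all=True` keeps only sentences that mention all of them, such as both 'bar stool' and 'height'.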

For me, the text documents used in my text analytics project are huge, and training a model on all of them would take a long time on my device. I am also not interested in every sentence in the dataset, so text extraction acts as part of the data cleaning process for my project. Extracting only the sentences that contain the defined keywords not only reduces the vocabulary size but also makes the model more precise for my needs. However, this method might not be suitable for every text analytics project. For example, if you are studying the overall sentiment of people toward a particular subject, object or event, extracting only some sentences might distort the overall sentiment.

Text extraction is the process of extracting pre-defined entities (types of information) from text content. The best technique for that is using Natural Language Processing (NLP) combined with custom regular expressions (regex). The TEXT2DATA service allows you to build your own custom extraction models using our online model builder tool. Apart from that, our users can use our ready-to-use, publicly available extraction models: the user only needs to select a model from the list and copy it to their own custom extraction models. This functionality is available in the user admin panel.

The list below describes all currently available extraction models at TEXT2DATA:

- Extracts basic entities: LOCATION, PERSON, ORGANIZATION
- Extracts LOCATION, PERSON, ORGANIZATION, MONEY, PERCENT, DATE, TIME
- Extracts clean, easy-to-read text content from HTML
- Extracts prices in the following currencies: USD, EUR and GBP
- Extracts email/phone/address in one request

At TEXT2DATA we are constantly working on adding new models and improving existing ones, so keep checking for updates.

To use the above models in our Excel Add-In or Google Sheets add-on:

1. Copy the extraction model to your model list in the TEXT2DATA user panel.
2. Select text in Excel or Google Sheets and click the "Extract" option in the add-on.
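TEXT2DATA's models are used through its panel and add-ons, and their internals are not described here, so the following is not its implementation or API. It is a regex-only sketch, under simplifying assumptions, of the kind of rules that could sit behind two of the listed models: email addresses and prices in USD, EUR and GBP. The names `extract_entities`, `EMAIL_RE` and `PRICE_RE` are hypothetical, and the patterns will miss many real-world formats (for example `EUR 5`, `5,00 €`, or internationalized email addresses). Entities such as PERSON or LOCATION need a trained NLP model and are not covered.

```python
import re

# Assumption: a simplified email pattern (local part, '@', dotted domain).
EMAIL_RE = re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b")

# Assumption: amounts use ',' as the thousands separator and '.' for decimals.
# The comma form is tried first so '1,200.50' is not cut short at '1'.
AMOUNT = r"\d{1,3}(?:,\d{3})+(?:\.\d{1,2})?|\d+(?:\.\d{1,2})?"

# A price is either a leading symbol ($12.99, €5, £1,200.50)
# or a trailing ISO code (12.99 USD, 15 GBP).
PRICE_RE = re.compile(
    rf"(?P<symbol>[$€£])\s?(?P<amount1>{AMOUNT})"
    rf"|\b(?P<amount2>{AMOUNT})\s?(?P<code>USD|EUR|GBP)\b"
)
SYMBOL_TO_CODE = {"$": "USD", "€": "EUR", "£": "GBP"}


def extract_entities(text):
    """Return emails and (currency_code, amount) price tuples found in text."""
    prices = []
    for m in PRICE_RE.finditer(text):
        if m.group("symbol"):
            code, amount = SYMBOL_TO_CODE[m.group("symbol")], m.group("amount1")
        else:
            code, amount = m.group("code"), m.group("amount2")
        # Strip thousands separators before converting to a number.
        prices.append((code, float(amount.replace(",", ""))))
    return {"emails": EMAIL_RE.findall(text), "prices": prices}


if __name__ == "__main__":
    sample = "Contact sales@example.com. The stool costs $49.99 and the lamp 15 GBP."
    print(extract_entities(sample))
```

Mapping each currency symbol to its ISO code means `$49.99` and `49.99 USD` come out in the same form, which keeps the extracted prices comparable.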
